Shader Test

Language: C++, GLSL; Graphics API: OpenGL

Overview of the project

Motivation

- To learn how shaders communicate with OpenGL in the rendering pipeline.

Problem I faced

- 3D geometry data, such as vertices, vertex normals and colours, needs to be transferred to the shaders. The modelview and perspective (projection) matrices must also be passed to them.
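On the CPU side, this data usually ends up in a vertex buffer plus a few uniforms. The sketch below assumes an interleaved layout (position, normal, colour) in a hypothetical `Vertex` struct; it is not taken from the project. It shows the stride and offsets that `glVertexAttribPointer` needs for each attribute, and builds a column-major perspective matrix with the same layout as `gluPerspective`, suitable for `glUniformMatrix4fv` with `transpose = GL_FALSE`.

```cpp
#include <array>
#include <cmath>
#include <cstddef>

// Hypothetical interleaved vertex as stored in a vertex buffer object.
struct Vertex {
    float position[3];  // e.g. attribute location 0
    float normal[3];    // e.g. attribute location 1
    float colour[3];    // e.g. attribute location 2
};

// Byte stride and per-attribute offsets, as passed to glVertexAttribPointer.
constexpr std::size_t kStride = sizeof(Vertex);
constexpr std::size_t kPositionOffset = offsetof(Vertex, position);
constexpr std::size_t kNormalOffset = offsetof(Vertex, normal);
constexpr std::size_t kColourOffset = offsetof(Vertex, colour);

// Column-major perspective matrix (index = column * 4 + row), same layout as
// gluPerspective. Upload with glUniformMatrix4fv(loc, 1, GL_FALSE, m.data()).
std::array<float, 16> perspective(float fovyRadians, float aspect,
                                  float zNear, float zFar) {
    const float f = 1.0f / std::tan(fovyRadians / 2.0f);
    std::array<float, 16> m{};                       // all zeros
    m[0] = f / aspect;                               // column 0, row 0
    m[5] = f;                                        // column 1, row 1
    m[10] = (zFar + zNear) / (zNear - zFar);         // column 2, row 2
    m[11] = -1.0f;                                   // column 2, row 3
    m[14] = (2.0f * zFar * zNear) / (zNear - zFar);  // column 3, row 2
    return m;
}
```

Interleaving keeps each vertex's attributes contiguous in memory; a separate buffer per attribute would work equally well, with a stride of zero (tightly packed) and an offset of zero for each.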

Features included in this project

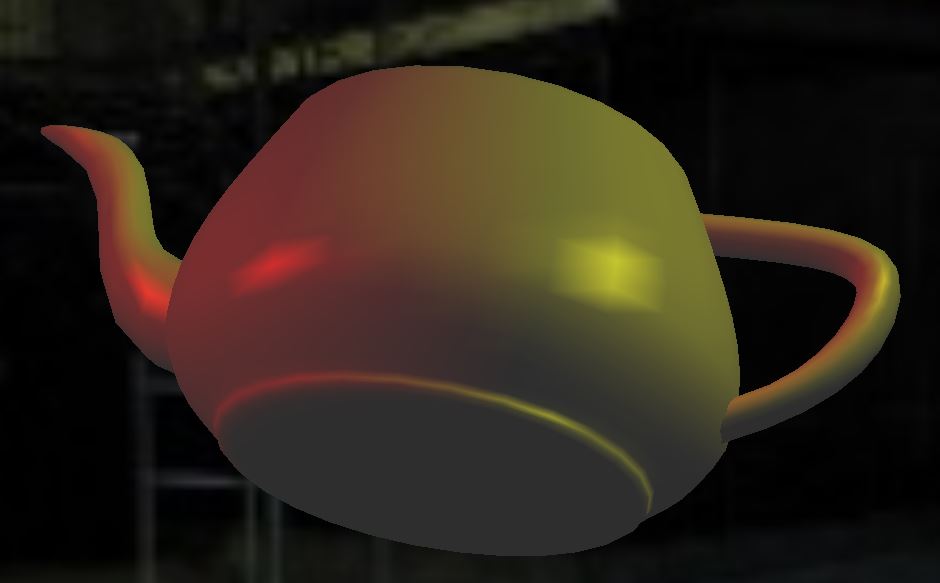

- Gouraud and Phong shading

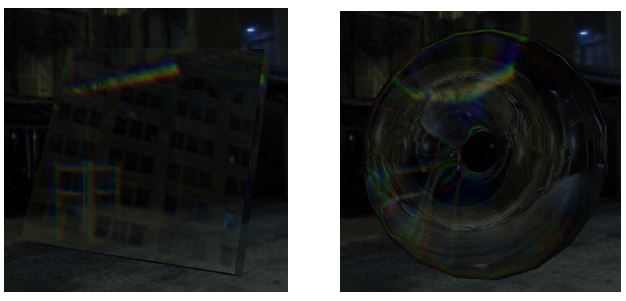

- Environment mapping (cube mapping)

- Reflection and refraction

Details of the project

1. Introduction

This project is a simple shader test for learning how data is transferred between the vertex and fragment shaders. It also aims to build an understanding of the OpenGL pipeline. The project covers Gouraud shading, Phong shading and environment mapping.

2. Gouraud and Phong Shading

To render a 3D object in computer graphics, we need to consider the surface normal, the positions of the lights and the camera, and the material of the object. The colour of a pixel on the screen is built from three components: ambient, diffuse and specular. The final colour is the combination of these three components, which can be written as:

pixel colour = ambient + diffuse + specular
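As a concrete illustration, here is a minimal CPU-side sketch of the classic Phong reflection model in C++. The `Vec3`, `Material` and `phong` names are hypothetical; `reflect` mirrors the formula of the GLSL built-in of the same name. The diffuse term scales with the cosine between the normal and the light direction, and the specular term raises the cosine between the reflected light ray and the view direction to a shininess exponent.

```cpp
#include <algorithm>
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 normalize(Vec3 v) { return v * (1.0f / std::sqrt(dot(v, v))); }

// Same formula as GLSL reflect(I, N): I - 2 * dot(N, I) * N.
Vec3 reflect(Vec3 i, Vec3 n) { return i - n * (2.0f * dot(n, i)); }

struct Material {
    Vec3 ambient, diffuse, specular;  // k_a, k_d, k_s as RGB colours
    float shininess;                  // specular exponent
};

// pixel colour = ambient + diffuse + specular (white light assumed).
// n: surface normal, l: direction to the light, v: direction to the eye.
Vec3 phong(const Material& m, Vec3 n, Vec3 l, Vec3 v) {
    n = normalize(n);
    l = normalize(l);
    v = normalize(v);
    const float nDotL = std::max(dot(n, l), 0.0f);
    float spec = 0.0f;
    if (nDotL > 0.0f) {  // no highlight when the light is behind the surface
        const Vec3 r = reflect(l * -1.0f, n);  // mirror the incoming light ray
        spec = std::pow(std::max(dot(r, v), 0.0f), m.shininess);
    }
    return m.ambient + m.diffuse * nDotL + m.specular * spec;
}
```

Whether this function runs per vertex or per fragment is exactly the difference between Gouraud and Phong shading.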

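Gouraud shading (a form of smooth shading, not to be confused with flat shading, which uses one colour per polygon) computes the lighting colour at each vertex in the vertex shader; the colour inside the polygon is then interpolated from the colours of its vertices.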

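The advantage of Gouraud shading is that it is fairly cheap to compute, because lighting is evaluated only once per vertex. Its drawback is that it cannot render accurate highlights: a specular highlight that falls inside a polygon, away from its vertices, is smeared or missed entirely.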

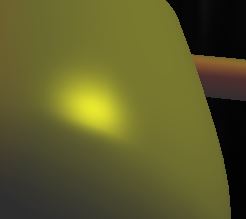

In Phong shading, the surface normal, rather than the colour, is interpolated across the polygon from the normals of its vertices, and the colour of each pixel is computed in the fragment shader. This lets it simulate much more accurate highlights on the object.
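The difference can be seen on a single polygon edge. In the hypothetical setup below, the two vertex normals are tilted 45° away from the light and the eye (both along +z), so neither vertex sees the highlight, while the true normal at the midpoint faces the light directly. Only the specular term is computed; all names are illustrative.

```cpp
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 operator+(Vec3 a, Vec3 b) { return {a.x + b.x, a.y + b.y, a.z + b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 normalize(Vec3 v) { return v * (1.0f / std::sqrt(dot(v, v))); }
Vec3 interpolate(Vec3 a, Vec3 b, float t) { return a * (1.0f - t) + b * t; }

// Phong specular term with the light and the eye both along +z.
float specular(Vec3 n, float shininess) {
    const Vec3 l{0.0f, 0.0f, 1.0f};  // direction to the light
    const Vec3 v{0.0f, 0.0f, 1.0f};  // direction to the eye
    n = normalize(n);
    const Vec3 r = n * (2.0f * dot(n, l)) + l * -1.0f;  // reflect(-l, n)
    const float rv = dot(r, v);
    return rv > 0.0f ? std::pow(rv, shininess) : 0.0f;
}

// Gouraud: light each vertex, then interpolate the resulting intensities.
float gouraudAt(Vec3 n0, Vec3 n1, float t, float shininess) {
    return specular(n0, shininess) * (1.0f - t) + specular(n1, shininess) * t;
}

// Phong shading: interpolate the normal, re-normalise, then light the pixel.
float phongAt(Vec3 n0, Vec3 n1, float t, float shininess) {
    return specular(interpolate(n0, n1, t), shininess);
}
```

At the midpoint (`t = 0.5`) Gouraud shading yields essentially no highlight, while Phong shading yields a full-strength one.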

3. Environment Mapping (Reflection)

Environment mapping approximates the appearance of a reflective material using precomputed texture images. The surface of the object is treated as reflective, and each point on it is coloured by the part of the surrounding texture that it reflects. We assume that the world is surrounded by the textures below.
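When a cube map is sampled with a direction vector, the GPU first chooses one of the six face textures and then a position on that face. The sketch below reproduces the major-axis selection rule from the OpenGL specification on the CPU, purely for illustration; the `CubeFace`, `CubeCoord` and `cubeMapLookup` names are hypothetical, and in a shader this all happens inside a single `samplerCube` lookup.

```cpp
#include <cmath>

enum class CubeFace { PositiveX, NegativeX, PositiveY, NegativeY, PositiveZ, NegativeZ };

struct CubeCoord {
    CubeFace face;
    float s, t;  // texture coordinates in [0, 1] on the selected face
};

// The largest-magnitude component picks the face; the other two components,
// divided by it, give (s, t). The direction must be non-zero. Ties are broken
// in favour of x, then y (an arbitrary choice in this sketch).
CubeCoord cubeMapLookup(float rx, float ry, float rz) {
    const float ax = std::fabs(rx), ay = std::fabs(ry), az = std::fabs(rz);
    CubeFace face;
    float sc, tc, ma;
    if (ax >= ay && ax >= az) {
        face = rx >= 0.0f ? CubeFace::PositiveX : CubeFace::NegativeX;
        sc = rx >= 0.0f ? -rz : rz;
        tc = -ry;
        ma = ax;
    } else if (ay >= az) {
        face = ry >= 0.0f ? CubeFace::PositiveY : CubeFace::NegativeY;
        sc = rx;
        tc = ry >= 0.0f ? rz : -rz;
        ma = ay;
    } else {
        face = rz >= 0.0f ? CubeFace::PositiveZ : CubeFace::NegativeZ;
        sc = rz >= 0.0f ? rx : -rx;
        tc = -ry;
        ma = az;
    }
    return {face, 0.5f * (sc / ma + 1.0f), 0.5f * (tc / ma + 1.0f)};
}
```

Because only the direction matters, not the position of the surface point, the environment behaves as if it were infinitely far away.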

In other words, it maps the surrounding environment itself onto the object rather than a fixed texture, so the image drawn on the object changes with the viewing angle. This is implemented in the following code, which is part of the fragment shader.

In this code, the reflection direction is computed from the direction of the eye (camera) towards the surface point and the surface normal. The GPU then uses this direction to look up the corresponding texel in the cube map.
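The reflection step can be sketched in C++ as follows. This is not the project's shader; `reflect` mirrors the GLSL built-in `reflect(I, N)`, and the helper `environmentLookupDir` is hypothetical. It assumes the eye position, surface point and normal are all expressed in the same space as the cube map (world space here); in GLSL the returned vector would be passed to `texture(cubeMap, r)`.

```cpp
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }
Vec3 normalize(Vec3 v) { return v * (1.0f / std::sqrt(dot(v, v))); }

// Same formula as GLSL reflect(I, N) = I - 2 * dot(N, I) * N.
// N must be normalised for a true mirror reflection.
Vec3 reflect(Vec3 i, Vec3 n) { return i - n * (2.0f * dot(n, i)); }

// Direction used to sample the cube map for one surface point.
Vec3 environmentLookupDir(Vec3 eye, Vec3 point, Vec3 normal) {
    const Vec3 incident = normalize(point - eye);  // from the eye to the surface
    return reflect(incident, normalize(normal));
}
```

For example, looking straight down at a floor (normal +y) from 45° above reflects the view ray to 45° upwards, so that point shows the part of the environment in front of and above it.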

The result is shown below. In the image on the left, only the cube map is applied; in the image on the right, lighting has been applied as well.

4. Refraction

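Refraction occurs when light passes from one material into another with a different optical density (refractive index). The change in direction is described by Snell's law, and GLSL provides a built-in function, refract(), that computes the refracted direction. (The Fresnel equations, by contrast, describe how much of the light is reflected and how much is refracted.)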

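Eta is the ratio of the refractive indices of the two materials, eta = n1 / n2, where n1 is the index of the material the light comes from and n2 the index of the material it enters. In my implementation, eta is the ratio between air and glass (with typical indices of about 1.0 for air and 1.5 for glass, eta is roughly 0.67).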
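The built-in follows a formula given in the GLSL specification; a direct C++ transcription is sketched below so its behaviour can be checked on the CPU. `Vec3` and the helper operators are hypothetical scaffolding. Both `I` and `N` must be normalised, and the zero vector is returned on total internal reflection, exactly as GLSL does.

```cpp
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// Transcription of GLSL refract(I, N, eta): Snell's law in vector form.
// I: incident direction, N: surface normal facing against I, eta = n1 / n2.
Vec3 refract(Vec3 i, Vec3 n, float eta) {
    const float nDotI = dot(n, i);
    const float k = 1.0f - eta * eta * (1.0f - nDotI * nDotI);
    if (k < 0.0f) return {0.0f, 0.0f, 0.0f};  // total internal reflection
    return i * eta - n * (eta * nDotI + std::sqrt(k));
}
```

At normal incidence the ray passes straight through; at 45° from air into glass the sine of the refracted angle shrinks by the factor eta, and going the other way (glass to air, eta = 1.5) the same angle is already past the critical angle.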

In the fragment shader, we can decide how much of the light is reflected and how much is refracted.

In this case, 80% of the light is refracted and 20% is reflected.
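A fixed 80/20 split like this is a linear blend. In GLSL it would typically be one call such as `mix(reflectedColour, refractedColour, 0.8)` (variable names hypothetical); the C++ sketch below mirrors the formula of the `mix` built-in so the arithmetic is explicit. `reflected` and `refracted` stand for the colours sampled from the cube map along the reflection and refraction directions.

```cpp
struct Colour { float r, g, b; };

// Same formula as GLSL mix(x, y, a) = x * (1 - a) + y * a.
Colour mix(Colour x, Colour y, float a) {
    return {x.r * (1.0f - a) + y.r * a,
            x.g * (1.0f - a) + y.g * a,
            x.b * (1.0f - a) + y.b * a};
}

// Fraction of the final colour taken from the refracted sample (80%);
// the remaining 20% comes from the reflected sample.
constexpr float kRefractionRatio = 0.8f;

Colour combine(Colour reflected, Colour refracted) {
    return mix(reflected, refracted, kRefractionRatio);
}
```

A constant ratio is the simplest choice; a physically based renderer would instead vary it with the viewing angle according to the Fresnel equations.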

We can also refract each wavelength (the R, G and B channels) by a different amount, using a separate eta for each channel.
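Per-channel refraction (chromatic dispersion) amounts to computing one refracted direction per colour channel. The sketch below assumes illustrative eta values (`kEtaR`, `kEtaG`, `kEtaB` are hypothetical, not the project's), ordered so that blue bends the most, as in real glass where the refractive index is higher for shorter wavelengths. In a fragment shader each direction would sample the cube map and only its own channel would be kept.

```cpp
#include <cmath>

struct Vec3 { float x, y, z; };

Vec3 operator-(Vec3 a, Vec3 b) { return {a.x - b.x, a.y - b.y, a.z - b.z}; }
Vec3 operator*(Vec3 a, float s) { return {a.x * s, a.y * s, a.z * s}; }
float dot(Vec3 a, Vec3 b) { return a.x * b.x + a.y * b.y + a.z * b.z; }

// GLSL-style refract(I, N, eta); returns zero on total internal reflection.
Vec3 refract(Vec3 i, Vec3 n, float eta) {
    const float nDotI = dot(n, i);
    const float k = 1.0f - eta * eta * (1.0f - nDotI * nDotI);
    if (k < 0.0f) return {0.0f, 0.0f, 0.0f};
    return i * eta - n * (eta * nDotI + std::sqrt(k));
}

// Hypothetical air-to-glass ratios per channel: smaller eta bends more,
// so blue (smallest eta) is refracted the most.
constexpr float kEtaR = 0.67f, kEtaG = 0.66f, kEtaB = 0.65f;

struct DispersedRays { Vec3 red, green, blue; };

// One refraction direction per channel: colour.r would come from the red ray,
// colour.g from the green ray and colour.b from the blue ray.
DispersedRays disperse(Vec3 incident, Vec3 normal) {
    return {refract(incident, normal, kEtaR),
            refract(incident, normal, kEtaG),
            refract(incident, normal, kEtaB)};
}
```

At normal incidence all three rays coincide, so the colour fringes appear only where the surface is viewed at an angle.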